Feature Selection in Data Mining

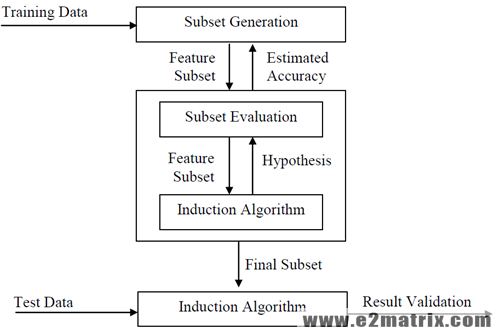

In machine learning and statistics, feature selection, also known as variable selection, is the process of selecting a subset of relevant features for use in model construction. The central premise behind using a feature selection approach is that the data contain a number of attributes, some of which are redundant or irrelevant. A feature selection algorithm can be seen as the combination of a search technique for proposing new feature subsets, along with an evaluation measure which scores the different feature subsets. The simplest algorithm is to test each possible subset of features and find the one which minimizes the error rate. This is an exhaustive search of the space and is computationally intractable for all but the smallest of feature sets. The choice of evaluation metric heavily influences the algorithm, and it is these evaluation metrics which distinguish between the three main categories of feature selection algorithms: wrappers, filters and embedded methods.
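As a rough illustration of the exhaustive approach described above, the following sketch scores every non-empty feature subset and keeps the one with the lowest error. The error measure (leave-one-out error of a 1-nearest-neighbour classifier), the function names, and the toy data are all assumptions made for this example; any other error estimate could be plugged in.

```python
from itertools import combinations


def loo_1nn_error(X, y, subset):
    """Leave-one-out error rate of a 1-nearest-neighbour classifier
    that only looks at the feature indices in `subset` (illustrative choice)."""
    errors = 0
    for i, xi in enumerate(X):
        best_dist, best_label = None, None
        for j, xj in enumerate(X):
            if i == j:
                continue
            dist = sum((xi[k] - xj[k]) ** 2 for k in subset)
            if best_dist is None or dist < best_dist:
                best_dist, best_label = dist, y[j]
        errors += best_label != y[i]
    return errors / len(X)


def exhaustive_search(X, y, error_fn=loo_1nn_error):
    """Evaluate all 2^n - 1 non-empty subsets and return the first one
    that reaches the lowest error rate (smaller subsets are tried first)."""
    n_features = len(X[0])
    best_subset, best_error = None, float("inf")
    for size in range(1, n_features + 1):
        for subset in combinations(range(n_features), size):
            err = error_fn(X, y, subset)
            if err < best_error:
                best_subset, best_error = subset, err
    return best_subset, best_error


# Hypothetical toy data: feature 0 separates the classes,
# feature 1 is noise, feature 2 is constant.
X = [
    [0.0, 5.0, 1.0],
    [0.1, 1.0, 1.0],
    [0.2, 9.0, 1.0],
    [1.0, 4.8, 1.0],
    [1.1, 1.2, 1.0],
    [1.2, 9.1, 1.0],
]
y = [0, 0, 0, 1, 1, 1]

best_subset, best_error = exhaustive_search(X, y)
print(best_subset, best_error)
```

With n features the loop visits 2^n - 1 subsets, which is why this approach stops being practical beyond a handful of features.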

Feature Selection Models

Feature selection should be distinguished from feature extraction: feature extraction creates new features from functions of the original features, while feature selection returns a subset of the features. Feature selection methods are often used in domains where there are many features and comparatively few samples. Redundant features are those which provide no more information than the currently selected features, and irrelevant features provide no useful information in any context. Feature selection methods are a subset of the more general field of feature extraction. Feature selection is beneficial when building predictive models, and it is also useful as part of the data analysis process, as it shows which features are important for prediction and how these features are related.

Filter methods use a proxy measure instead of the error rate to score a feature subset. This measure is chosen to be fast to compute, whilst still capturing the usefulness of the feature set. One such feature selection model is CFS (Correlation Feature Selection), used with Best First as the search technique.
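A minimal sketch of a filter method follows. It assumes numeric features and uses the absolute Pearson correlation between each feature and the class label as the proxy measure; this choice of measure, the function names, and the toy data are assumptions for illustration, and other proxies would work the same way.

```python
from math import sqrt


def pearson(a, b):
    """Pearson correlation of two equal-length sequences.
    Returns 0.0 when either sequence is constant."""
    n = len(a)
    mean_a, mean_b = sum(a) / n, sum(b) / n
    cov = sum((x - mean_a) * (v - mean_b) for x, v in zip(a, b))
    var_a = sum((x - mean_a) ** 2 for x in a)
    var_b = sum((v - mean_b) ** 2 for v in b)
    if var_a == 0 or var_b == 0:
        return 0.0
    return cov / sqrt(var_a * var_b)


def filter_rank(X, y):
    """Score each feature by |correlation with the label| and
    return (feature indices sorted best first, list of scores)."""
    columns = list(zip(*X))
    scores = [abs(pearson(col, y)) for col in columns]
    order = sorted(range(len(columns)), key=lambda i: scores[i], reverse=True)
    return order, scores


# Hypothetical toy data: feature 0 tracks the label,
# feature 1 is noise, feature 2 is constant.
X = [
    [0.0, 5.0, 1.0],
    [0.1, 1.0, 1.0],
    [0.2, 9.0, 1.0],
    [1.0, 4.8, 1.0],
    [1.1, 1.2, 1.0],
    [1.2, 9.1, 1.0],
]
y = [0, 0, 0, 1, 1, 1]

order, scores = filter_rank(X, y)
print(order, scores)
```

No model is trained here, which is what makes the proxy measure fast to compute compared with estimating an error rate for every candidate subset.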

1) Correlation Feature Selection (CFS)

CFS (Correlation Feature Selection) is an algorithm that quickly identifies and screens out irrelevant, redundant, and noisy features, and identifies relevant features as long as their relevance does not strongly depend on other features. On natural domains, CFS typically eliminated well over half the features. In most cases, classification accuracy using the reduced feature set equaled or improved on accuracy using the complete feature set. Feature selection degraded machine learning performance in cases where some of the eliminated features were highly predictive of very small areas of the instance space.
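The text above does not state how CFS scores a subset. The sketch below assumes the merit heuristic usually associated with CFS, merit = k * r_cf / sqrt(k + k(k-1) * r_ff), where k is the subset size, r_cf the mean feature-class correlation and r_ff the mean feature-feature correlation. Two simplifications are made and should be read as assumptions: absolute Pearson correlation stands in for the correlation measure, and a plain greedy forward search stands in for Best First. All names and data are hypothetical.

```python
from math import sqrt


def pearson(a, b):
    """Pearson correlation; 0.0 if either sequence is constant."""
    n = len(a)
    mean_a, mean_b = sum(a) / n, sum(b) / n
    cov = sum((x - mean_a) * (v - mean_b) for x, v in zip(a, b))
    var_a = sum((x - mean_a) ** 2 for x in a)
    var_b = sum((v - mean_b) ** 2 for v in b)
    if var_a == 0 or var_b == 0:
        return 0.0
    return cov / sqrt(var_a * var_b)


def cfs_merit(subset, feat_class_corr, feat_feat_corr):
    """Merit of a subset: high when features correlate with the class
    and low when they correlate with each other."""
    k = len(subset)
    if k == 0:
        return 0.0
    r_cf = sum(feat_class_corr[i] for i in subset) / k
    pairs = [(i, j) for i in subset for j in subset if i < j]
    r_ff = sum(feat_feat_corr[i][j] for i, j in pairs) / len(pairs) if pairs else 0.0
    return k * r_cf / sqrt(k + k * (k - 1) * r_ff)


def greedy_cfs(X, y):
    """Greedy forward selection on the merit (a simpler stand-in for Best First)."""
    cols = list(zip(*X))
    n = len(cols)
    r_cf = [abs(pearson(c, y)) for c in cols]
    r_ff = [[abs(pearson(cols[i], cols[j])) for j in range(n)] for i in range(n)]
    selected, best = [], 0.0
    while len(selected) < n:
        merit, i = max((cfs_merit(selected + [i], r_cf, r_ff), i)
                       for i in range(n) if i not in selected)
        if merit <= best:  # no candidate improves the merit: stop
            break
        selected.append(i)
        best = merit
    return selected, best


# Hypothetical toy data: feature 0 is predictive, feature 1 is a noisier
# near-copy of feature 0 (redundant), feature 2 is noise.
X = [
    [0.0, 0.4, 5.0],
    [0.1, 0.0, 1.0],
    [0.2, 0.3, 9.0],
    [1.0, 0.8, 4.8],
    [1.1, 1.2, 1.2],
    [1.2, 0.9, 9.1],
]
y = [0, 0, 0, 1, 1, 1]

selected, merit = greedy_cfs(X, y)
print(selected, merit)
```

On this toy data the redundant feature is left out even though it correlates well with the class, because adding it raises the feature-feature term in the denominator more than it raises the numerator.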

2) Consistency Based Feature Selection (CBF)

For classification, feature selection is used to find an optimal subset of relevant features, so that overall classification accuracy increases while the data size is reduced and comprehensibility is improved. Feature selection techniques involve two main aspects: evaluation of a candidate feature subset and search through the feature space. A number of measures are used to evaluate the goodness of feature subsets. Consistency-based selection relies on an inconsistency measure, according to which a feature subset is inconsistent if there exist at least two instances with the same feature values but with different class labels. This measure can be compared with other measures and combined with different search strategies, such as exhaustive, complete, heuristic and random search. Empirical study of these search approaches helps to weigh their pros and cons and gives some guidance on selecting one.
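The inconsistency definition above can be sketched as follows. The boolean check follows the definition directly; the rate (instances outside the majority class of each matching group, divided by the number of instances) is one commonly used way to turn it into a score and is an assumption here, as are the function names and the toy data.

```python
from collections import Counter, defaultdict


def group_labels(X, y, subset):
    """Group class labels by the values each instance takes on `subset`."""
    groups = defaultdict(Counter)
    for row, label in zip(X, y):
        pattern = tuple(row[i] for i in subset)
        groups[pattern][label] += 1
    return groups


def is_inconsistent(X, y, subset):
    """True if two instances agree on `subset` but have different labels."""
    return any(len(counts) > 1 for counts in group_labels(X, y, subset).values())


def inconsistency_rate(X, y, subset):
    """For each group of matching instances, count those not in the
    majority class; return the total as a fraction of all instances."""
    groups = group_labels(X, y, subset)
    clashes = sum(sum(c.values()) - max(c.values()) for c in groups.values())
    return clashes / len(X)


# Hypothetical toy data: only feature 2 determines the label.
X = [
    ["sunny", "hot", "high"],
    ["sunny", "hot", "normal"],
    ["rainy", "mild", "high"],
    ["rainy", "mild", "normal"],
]
y = ["no", "yes", "no", "yes"]

print(is_inconsistent(X, y, (0, 1)), inconsistency_rate(X, y, (0, 1)))
print(is_inconsistent(X, y, (2,)), inconsistency_rate(X, y, (2,)))
```

A search strategy would then look for the smallest subset whose inconsistency rate does not exceed that of the full feature set.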

3) Information Gain (IG)

In information theory and machine learning, information gain is a synonym for Kullback–Leibler divergence. However, in the context of decision trees, the term is sometimes used synonymously with mutual information, which is the expected value of the Kullback–Leibler divergence. Datasets for analysis may contain hundreds of attributes, many of which may be irrelevant to the mining task, or redundant. Although it may be possible for a domain expert to pick out some of the useful attributes, this can be a difficult and time-consuming task, especially when the behavior of the data is not well known. Leaving out relevant attributes or keeping irrelevant attributes may be detrimental, causing confusion for the mining algorithm employed. In machine learning, information gain can be used to define a preferred sequence of attributes to examine in order to narrow down the state of the target variable most rapidly.
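In the decision-tree sense, the information gain of an attribute is the entropy of the class labels minus the expected entropy of the labels after splitting on that attribute. The sketch below assumes discrete attributes; the function names and the toy data are hypothetical.

```python
from collections import Counter, defaultdict
from math import log2


def entropy(labels):
    """Shannon entropy (in bits) of a list of class labels."""
    n = len(labels)
    return -sum((c / n) * log2(c / n) for c in Counter(labels).values())


def information_gain(X, y, feature):
    """Entropy of y minus the weighted entropy of y within each
    group of instances sharing the same value of `feature`."""
    groups = defaultdict(list)
    for row, label in zip(X, y):
        groups[row[feature]].append(label)
    remainder = sum(len(g) / len(y) * entropy(g) for g in groups.values())
    return entropy(y) - remainder


def rank_by_information_gain(X, y):
    """Return (feature indices sorted by gain, best first, list of gains)."""
    gains = [information_gain(X, y, f) for f in range(len(X[0]))]
    order = sorted(range(len(gains)), key=lambda f: gains[f], reverse=True)
    return order, gains


# Hypothetical toy data: only feature 2 determines the label.
X = [
    ["sunny", "hot", "high"],
    ["sunny", "hot", "normal"],
    ["rainy", "mild", "high"],
    ["rainy", "mild", "normal"],
]
y = ["no", "yes", "no", "yes"]

order, gains = rank_by_information_gain(X, y)
print(order, gains)
```

Sorting attributes by gain gives the preferred order in which to examine them: the attribute at the front of the list removes the most uncertainty about the class.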