Data Mining Tools

Data mining has a wide number of applications ranging from marketing and advertising of goods, services or products, artificial intelligence research, biological sciences, crime investigations to high-level government intelligence. Due to its widespread use and complexity involved in building data mining applications, a large number of Data mining tools have been developed over decades. Every tool has its own advantages and disadvantages.

Within data mining, there is a group of tools that have been developed by a research community and data analysis enthusiasts; they are offered free of charge using one of the existing open-source licenses. An open-source development model usually means that the tool is a result of a community effort, not necessarily supported by a single institution but instead the result of contributions from an international and informal development team. This development style offers a means of incorporating the diverse experiences

Data mining provides many mining techniques to extract data from databases. Data mining tools predict future trends, behaviors, allowing business to make proactive, knowledge-driven decisions.

The development and application of data mining algorithms require use of very powerful software tools. As the number of available tools continues to grow the choice of the most suitable tool becomes increasingly difficult.

The top six open source tools available for data mining are briefed as below.

- WEKA

Waikato Environment for Knowledge Analysis. Weka is a collection of machine learning algorithms for data mining tasks. These algorithms can either be applied directly to a dataset or can be called from your own Java code. The Weka (pronounced Weh-Kuh) workbench contains a collection of several tools for visualization and algorithms for analytics of data and predictive modeling, together with graphical user interfaces for easy access to this functionality.

Technical Specification:

- First released in 1997.

- Latest version available is WEKA 3.6.11.

- Has GNU general public license.

- Platform independent software.

- Supported by Java

- Can be downloaded from www.cs.waikato.ac.

General Features

- Weka is a Java-based open source tool data mining tool which is a collection of many data mining and machine learning algorithms, including pre-processing of data, classification, clustering, and association rule extraction

- Weka provides three graphical user interfaces i.e. the Explorer for exploratory data analysis to support preprocessing, attribute selection, learning, visualization, the Experimenter that provides an experimental environment for testing and evaluating machine learning algorithms, and the Knowledge Flow for new process model inspired interface for the visual design of KDD process. A simple Command-line explorer which is a simple interface for typing commands is also provided by weka.

Specialization:

- Weka is best suited for mining association rules.

- Stronger in machine learning techniques.

- Suited for Machine Learning.

Advantages

- It is also suitable for developing new machine learning schemes

- Weka loads data file in formats of ARFF, CSV, and C4.5, binary. Though it is open source, Free, Extensible, Can be integrated into other java packages.

Limitation

- It lacks proper and adequate documentation and suffers from “Kitchen Sink Syndrome” where systems are updated constantly.

- Worse connectivity to Excel spreadsheet and non-Java based databases.

- CSV reader not as robust as in Rapid Miner.

- Not as polished.

- Weka is much weaker in classical statistics.

- Does not have the facility to save parameters for scaling to apply to future datasets.

- Does not have automatic facility for Parameter optimization of machine learning/statistical methods

- KEEL

Knowledge Extraction based on Evolutionary Learning is an application package of machine learning software tools. KEEL is designed for providing a solution to data mining problems and assessing evolutionary algorithms. It has a collection of libraries for preprocessing and post-processing techniques for data manipulating, soft-computing methods in the knowledge of extracting and learning and providing scientific and research methods.

Technical Overview

- First released in 2004.

- Latest version available is KEEL 2.0.

- Licensed by GNU, general public license.

- Can run on any platform.

- Supported by Java language.

- Can be downloaded from www.sci2s.ugr.es/keel.

Specialization

- Keel is a software tool to assess evolutionary algorithms for Data Mining problems.

- Machine learning tool.

Advantages

- It includes regression, classification, clustering, and pattern mining and so on.

- It contains a big collection of classical knowledge extraction algorithms, preprocessing techniques (instance selection, feature selection, discretization, imputation methods for missing values etc.), Computational Intelligence based learning algorithms, including evolutionary rule learning algorithms based on different approaches (Pittsburgh, Michigan and IRL), and hybrid models such as genetic fuzzy systems, evolutionary neural networks etc.

Limitation

- Efficiency is restricted by the number of algorithms it supports as compared to other tools.

- R

Revolution is a free software programming language and software environment for statistical computing and graphics. The R language is widely used among statisticians and data miners for developing statistical software and data analysis. One of the R’s strengths is the ease with which well-designed publication-quality plots can be produced, including mathematical symbols and formulae where needed.

Technical Specification

- First released in 1997

- Latest version Available is 3.1.0

- Licensed by GNU General Public License

- Cross Platform

- C, Fortran, and R

- r-project.org

General Features

- The R project is a platform for the analysis, graphics, and software development activities of data miners and related areas.

- R is a well-supported, open source, a command line is driven, statistics package. There are hundreds of extra “packages” freely available, which provide all sorts of data mining, machine learning, and statistical techniques.

- It allows statisticians to do very intricate and complicated analyses without knowing the blood and guts of computing systems

Specification

- It has a large number of users, in particular in the fields of bioinformatics and social science. It is also a freeware replacement for SPSS.

- Suited for Statistical Computing.

Advantages

- Very extensive statistical library.

- It is a powerful elegant array language in the tradition of APL, Mathematica, and MATLAB, but also LISP/Scheme.

- Ability to make a working machine learning program in just 40 lines of code

- Numerical programming is better integrated with R

- R has better graphics.

- R is more transparent since the Orange are wrapped C++ classes.

- Easier to combine with other statistical calculations.

- Import and export of data from the spreadsheet are easier in R, the spreadsheet is stored in a data frames that the different machine learning algorithms are operating on.

- Programming in R really is very different, you are working on a higher abstraction level, but you do lose control over the details.

Limitation:

- Less specialized towards data mining.

- There is a steep learning curve unless you are familiar with array languages

- KNIME

Konstanz Information Miner is an open source data analytics, reporting and integration platform. It has been used in pharmaceutical research but is also used in other areas like CRM customer data analysis, business intelligence, and financial data analysis. It is based on the Eclipse platform and, through its modular API, and is easily extensible. Custom nodes and types can be implemented in KNIME within hours thus extending KNIME to comprehend and provide first-tier support for highly domain-specific data format.

Technical Specification

- Released on 2004.

- Latest version available is KNIME2.9

- Licensed By GNU General Public License

- Compatible with Linux, OS X, Windows

- Written in java

- knime.org

General Features

- Knime, pronounced “naim”, is a nicely designed data mining tool that runs inside the IBM’s Eclipse development environment.

- It is a modular data exploration platform that enables the user to visually create data flows (often referred to as pipelines), selectively execute some or all analysis steps, and later investigate the results through interactive views on data and models.

- The Knime base version already incorporates over 100 processing nodes for data I/O, preprocessing and cleansing, modeling, analysis and data mining as well as various interactive views, such as scatter plots, parallel coordinates, and others.

Specification

- Integration of the Chemistry Development Kit with additional nodes for the processing of chemical structures, compounds, etc.

- Specialized for Enterprise reporting, Business Intelligence, data mining.

Advantages

- It integrates all analysis modules of the well-known. Weka data mining environment and additional plugins allow R-scripts to be run, offering access to a vast library of statistical routines.

- It is easy to try out because it requires no installation besides downloading and unarchiving.

- The one aspect of KNIME that truly sets it apart from other data mining packages is its ability to interface with programs that allow for the visualization and analysis of molecular data.

Limitations

- Have only limited error measurement methods.

- RAPIDMINER

It is a software platform developed by the company of the same name that provides an integrated environment for machine learning, data mining, text mining, predictive analytics and business analytics. It is used for business and industrial applications as well as for research, education, training, rapid prototyping, and application development and supports all steps of the data mining process. Rapid Miner uses a client/server model with the server offered as Software as a Service or on cloud infrastructures.

Technical specification

- Released on 2006

- Latest version available is Rapid miner 6.

- Licensed by AGPL Proprietary

- Cross-platform i.e. can be installed on any operating system

- Language Independent

- Can be downloaded from www.rapidminer.com.

General Features

- Rapid miner is an environment for machine learning and data mining processes.

- It represents a new approach to design even very complicated problems by using a modular operator concept which allows the design of complex nested operator chains for a huge number of learning problems.

- The rapid miner uses XML to describe the operator trees modeling knowledge discovery process.

- It has flexible operators for data input and output file formats.

- It contains more than 100 learning schemes for regression classification and clustering analysis of data.

- Rapid miner supports about twenty-two file formats.

- Rapid Miner has a lot of functionality, is polished and has good connectivity.

- Rapid Miner includes many learning algorithms from WEKA.

- Solid and complete package.

- It easily reads and writes Excel files and different databases.

- You program by piping components together in a graphic ETL workflows.

- If you set up illegal workflows Rapid Miner suggest Quick Fixes to make it legal.

Specialization

- Rapid Miner provides support for most types of databases, which means that users can import information from a variety of database sources to be examined and analyzed within the application.

- Specialized for Business solutions that include predictive analysis and statistical computing.

Advantages

- Has the full facility for model evaluation using cross-validation and independent Validation sets.

- Over 1,500methods for data integration, data transformation, analysis and, modeling as well as visualization – no other solution on the market offers more procedures and therefore more possibilities of defining the optimal analysis processes.

- Rapid Miner offers numerous procedures, especially in the area of attribute selection and for outlier detection, which no other solution offers.

Limitations

- Rapid Miner is the data mining software package that is most suited for people who are accustomed to working with database files, such as in academic settings or in business settings. The reason for this is that the software requires the ability to manipulate SQL statements and files.

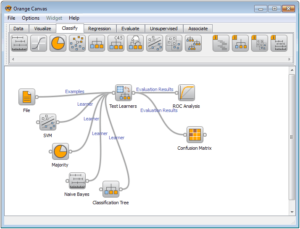

- ORANGE

Orange is a component-based data mining and machine learning software suite, featuring a visual programming front-end for explorative data analysis and visualization, and Python bindings and libraries for scripting. It includes a set of components for data preprocessing, feature scoring and filtering, modeling, model evaluation, and exploration techniques. It is implemented in C++ and Python. Its graphical user interface builds upon the cross-platform framework

Technical Requirements

- Developed in 2009.

- Latest version available is Orange 2.7

- Licensed by GNU General Public License

- Compatible with Python, C++, C.

- Can be downloaded from www.orange.biolab.si

General Features

- Orange is a component-based data mining and machine learning software suite.

- It includes a set of components for data preprocessing, feature scoring and filtering, modeling, model evaluation, and exploration techniques.

- Data mining in Orange is done through visual programming or Python scripting.

Specialization

- Open source data visualization and analysis for novice and experts.

- It contains components for machine learning and add-ons for bioinformatics and text mining. Along with its also packed with features for data analytics.

- Specialized for data visualization along with mining.

Advantages

- It is an open source data mining package build on Python, NumPy, wrapped C, C++, and Qt.

- Works both as a script and with an ETL workflow GUI.

- The shortest script for doing training, cross-validation, algorithms comparison and prediction.

- Orange the easiest tool to learn.

- Cross-platform GUI.

- Orange is written in python hence is easier for most programmers to learn.

- Has better debugger.

- Scripting data mining categorization problems are simpler in Orange.

- Orange does not give optimum performance for association rules.

Limitations

- Not super polished.

- The install is big since you need to install QT.

- A limited list of machine learning algorithms.

- Machine learning is not handled uniformly between the different libraries.

- Orange is weak in classical statistics; although it can compute basic statistical properties of the data, it provides no widgets for statistical testing.

- Reporting capabilities are limited to exporting visual representations of data models.

Recommended Posts

Data Mining Research Guidance and Thesis Topics

04 Jul 2018 - Data Mining

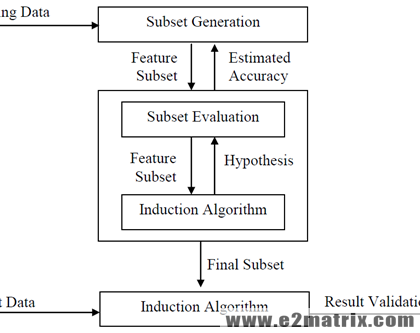

Feature Selection in Data Mining

06 Feb 2018 - Big Data, Data Mining, Machine Learning, Text Mining, Weka

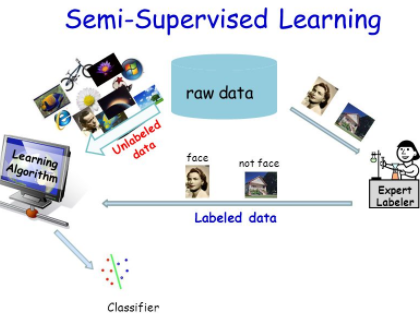

Semi-Supervised Learning Models

25 Jan 2018 - Data Mining, Machine Learning, Text Mining, Weka